Introduction

In the ever-evolving landscape of web applications and services, seamless user experiences, high availability, and optimal performance are critical. Load balancers have emerged as indispensable tools for achieving these goals. In this blog, we will dive into the world of load balancers and look at their role, types, benefits, and best practices for implementation.

What is a Load Balancer?

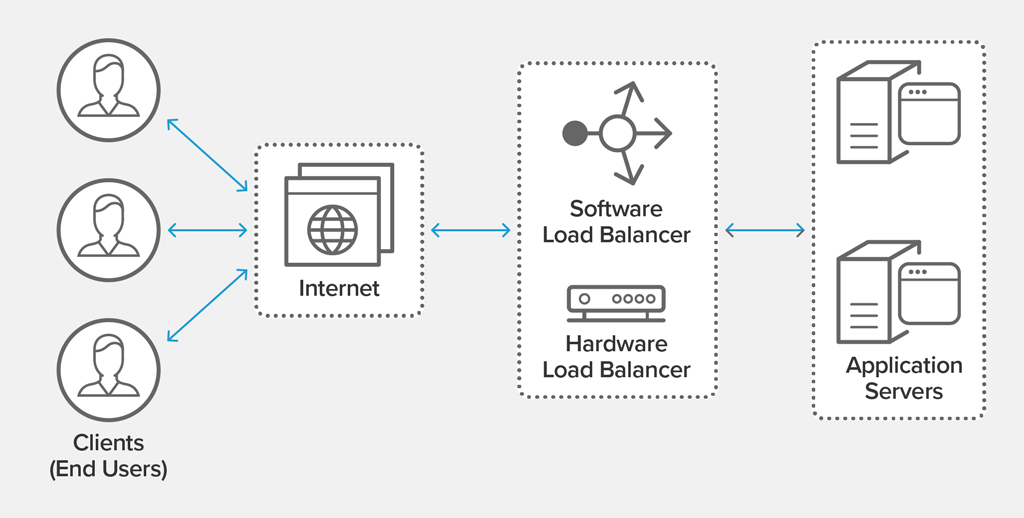

A load balancer acts as a mediator between incoming client requests and backend servers, distributing traffic across multiple servers. By balancing the workload, it prevents any single server from becoming overwhelmed, which keeps resource utilization high and response times low.

Modern high‑traffic websites must serve hundreds of thousands, if not millions, of concurrent requests from users or clients and return the correct text, images, video, or application data, all in a fast and reliable manner. To cost‑effectively scale to meet these high volumes, modern computing best practice generally requires adding more servers.

Types of Load Balancers

There are several types of load balancers, and the right one depends on the needs of your application:

Local Load Balancers

- Designed for distributing traffic within a data center or a localized network.

- Commonly used for optimizing internal application performance.

Global Load Balancers

- Responsible for distributing traffic across multiple data centers in different geographical regions.

- Enhance high availability and disaster recovery capabilities.

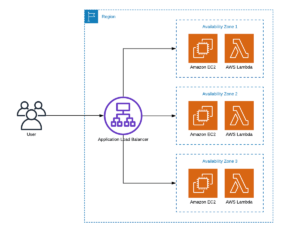

Application Load Balancers

- Operate at the application layer (Layer 7) of the OSI model.

- Can make intelligent decisions based on content, cookies, and other application-specific data.

Network Load Balancers

- Function at the transport layer (Layer 4) of the OSI model.

- Ideal for handling TCP and UDP traffic efficiently.
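The difference between Layer 4 and Layer 7 routing can be sketched in a few lines of Python. This is an illustrative sketch only, not how any particular product works: the `Request` class, the pool names, and the `backend` cookie are all hypothetical. The Layer 4 function sees only the client address and port, while the Layer 7 function can also inspect the path and cookies.

```python
import hashlib
from dataclasses import dataclass, field


@dataclass
class Request:
    # Hypothetical, simplified view of one incoming HTTP request.
    client_ip: str
    client_port: int
    path: str
    cookies: dict = field(default_factory=dict)


# Hypothetical server pools, named for illustration only.
API_POOL = ["api-1", "api-2"]
STATIC_POOL = ["static-1"]
DEFAULT_POOL = ["web-1", "web-2"]


def route_layer4(req, pool):
    """Layer 4: only connection-level data (address and port) is visible."""
    key = f"{req.client_ip}:{req.client_port}".encode()
    index = int.from_bytes(hashlib.sha256(key).digest()[:8], "big") % len(pool)
    return pool[index]


def route_layer7(req):
    """Layer 7: the request content (path, cookies) can drive the decision."""
    if req.path.startswith("/api/"):
        pool = API_POOL
    elif req.path.startswith("/static/"):
        pool = STATIC_POOL
    else:
        pool = DEFAULT_POOL
    # A cookie set on an earlier response can pin the client to one server.
    pinned = req.cookies.get("backend")
    if pinned in pool:
        return pinned
    # No usable cookie: fall back to a connection-level hash within the pool.
    return route_layer4(req, pool)
```

For example, a request for `/api/users` carrying the cookie `backend=api-2` is sent to `api-2`, while a request for `/static/logo.png` can only land on `static-1`.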

Benefits of Load Balancers

- Scalability: Load balancers facilitate horizontal scaling, because more servers can be added behind them to handle increased traffic without affecting performance.

- High Availability: By distributing traffic across multiple servers, load balancers ensure that if one server fails, others continue to handle requests.

- Improved Performance: With traffic spread evenly, response times drop, leading to faster and more reliable user experiences.

- Traffic Management: Load balancers enable intelligent traffic routing, allowing administrators to prioritize certain servers for specific tasks.

Load Balancing Algorithms

Load balancers use various algorithms to decide which server receives each request, including:

- Round Robin: Requests are distributed sequentially to each server.

- Least Connections: Each request is sent to the server with the fewest active connections.

- Least Time: Requests are sent to the server selected by a formula that combines the fastest response time and the fewest active connections.

- Hash: Requests are distributed based on a key you define, such as the client IP address or the request URL.

- IP Hash: The client’s IP address determines the server, so requests from the same client are sent to the same server.

- Random with Two Choices: Two servers are picked at random, and the request is sent to whichever of the two is less loaded (for example, the one with fewer active connections).
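To make a few of these algorithms concrete, here is a minimal Python sketch of Round Robin, Least Connections, IP Hash, and Random with Two Choices. It is a simplified illustration under assumptions, not the implementation used by any real load balancer: the server names are hypothetical, and connection counts are tracked in a plain dictionary.

```python
import hashlib
import itertools
import random

SERVERS = ["app-1", "app-2", "app-3"]  # hypothetical backend names


class RoundRobin:
    """Hand out servers in a fixed, repeating order."""

    def __init__(self, servers):
        self._cycle = itertools.cycle(servers)

    def pick(self):
        return next(self._cycle)


class LeastConnections:
    """Pick the server with the fewest active connections."""

    def __init__(self, servers):
        self.active = {server: 0 for server in servers}

    def pick(self):
        # Ties go to the server listed first.
        server = min(self.active, key=self.active.get)
        self.active[server] += 1  # caller must release() when the request ends
        return server

    def release(self, server):
        self.active[server] -= 1


def ip_hash(servers, client_ip):
    """Map a client IP to the same server on every request."""
    digest = hashlib.sha256(client_ip.encode()).digest()
    return servers[int.from_bytes(digest[:8], "big") % len(servers)]


def random_two_choices(active, rng=random):
    """Sample two distinct servers and keep the one with fewer connections."""
    first, second = rng.sample(list(active), 2)
    return first if active[first] <= active[second] else second
```

With three servers, four successive `RoundRobin.pick()` calls return `app-1`, `app-2`, `app-3`, and then `app-1` again. Note that the simple modulo in `ip_hash` reshuffles most clients whenever the server list changes, so this sketch only shows the basic idea.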

Best Practices for Load Balancer Implementation

- Redundancy: Deploy multiple load balancers to avoid single points of failure.

- Health Checks: Regularly monitor backend servers to ensure they are operational and healthy.

- Session Persistence: Use sticky sessions in scenarios where a user's requests must keep going to the server that holds their session data.

- Security: Implement security measures to protect against DDoS attacks and other threats.
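As one illustration of the health check practice above, the following Python sketch probes each backend and stops routing to servers that fail several checks in a row. The `HealthChecker` class, the TCP-connect probe, and the `max_failures` threshold are assumptions made for this example; real load balancers usually offer richer checks, such as HTTP status probes.

```python
import socket


def tcp_probe(host, port, timeout=1.0):
    """Return True if a TCP connection to host:port succeeds in time."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        return False


class HealthChecker:
    """Track consecutive failures and drop servers that keep failing."""

    def __init__(self, servers, probe=tcp_probe, max_failures=3):
        self.probe = probe
        self.max_failures = max_failures
        # Each server is a (host, port) tuple mapped to its failure streak.
        self.failures = {server: 0 for server in servers}

    def run_once(self):
        """Probe every server once; a success resets its failure streak."""
        for host, port in self.failures:
            if self.probe(host, port):
                self.failures[(host, port)] = 0
            else:
                self.failures[(host, port)] += 1

    def healthy(self):
        """Servers that have not yet reached the failure threshold."""
        return [s for s, n in self.failures.items() if n < self.max_failures]
```

In practice `run_once()` would be called on a timer, and the routing algorithm would pick only from `healthy()`. Requiring several consecutive failures avoids removing a server because of a single dropped probe.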

Conclusion

Load balancers play a crucial role in modern web architecture by enhancing scalability, performance, and reliability. They empower organizations to deliver seamless user experiences, tackle increased traffic, and ensure high availability. By understanding the different types, benefits, and best practices for implementation, businesses can leverage load balancers effectively to meet the demands of today’s dynamic digital landscape.
